Context

AI-generated fake videos, or deep fakes, are becoming more common and more convincing. They have been one of the key weapons in propaganda battles for some time now.

Background

- Deep fakes are fake multimedia content, often videos but also pictures or audio, created using powerful artificial intelligence tools.

- Deep fake technology makes it possible to synthesize media (face swapping, lip-syncing, and puppeteering), mostly without the consent of the people depicted. This threatens a nation's internal security and political stability and can disrupt business.

- Currently, most social media companies, such as Twitter and Facebook, have banned deepfake videos. They have said that any video they detect as manipulated or synthetically generated will be taken down.

- Today, as technology advances, it is becoming increasingly easy for anyone to produce deep fakes, and deep fakes are becoming harder to detect using traditional techniques.

This article therefore first explains what deep fakes are, then examines the challenges they pose for society and the ways to address them.

Analysis

What is a deep fake?

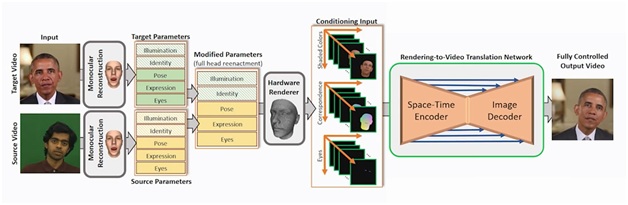

- Deep fakes are so called because they use deep learning, a branch of artificial intelligence that trains neural networks on large data sets, to create fake videos.

- Using this technology, one person’s head movements, expressions, and so on are transferred onto a video of another person in such a way that it is difficult to tell the result is a deep fake unless one closely examines the source media file.

- Here the AI learns what a source face looks like and then transposes it onto another target to perform a face swap seamlessly.

An illustration of how a Deep fake video is created

How are deepfakes detected currently?

- Currently, deep fakes are identified manually or by software, using identifiers such as:

- Flickering, blur with bleeding color, and similar artifacts in poorly produced deep fake videos

- Unusual eye-blinking patterns in deep fake videos

- Markers known as “soft biometrics” of a person, i.e., his/her eyebrow movements, lip movements, etc.
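To make the eye-blinking identifier above more concrete, here is a minimal sketch of a blink-rate check. It assumes that some face-tracking step (not shown) has already produced one "eye openness" score per video frame; the function names, the 0.2 closed-eye threshold, and the 5 to 40 blinks-per-minute range are all illustrative assumptions, not values taken from any real detector.

```python
from typing import List


def count_blinks(eye_openness: List[float], closed_below: float = 0.2) -> int:
    """Count blinks as transitions from an open eye to a closed eye.

    eye_openness holds one hypothetical score per frame (higher = more open).
    closed_below is an assumed threshold under which the eye counts as closed.
    """
    blinks = 0
    eye_closed = False
    for value in eye_openness:
        if value < closed_below and not eye_closed:
            blinks += 1          # the eye has just closed: one new blink
            eye_closed = True
        elif value >= closed_below:
            eye_closed = False   # the eye is open again
    return blinks


def blink_rate_is_unusual(
    eye_openness: List[float],
    fps: float,
    min_per_minute: float = 5.0,   # assumed lower bound, illustrative only
    max_per_minute: float = 40.0,  # assumed upper bound, illustrative only
) -> bool:
    """Return True if the blinks-per-minute rate falls outside the assumed range."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(eye_openness) / fps / 60.0
    rate = count_blinks(eye_openness) / minutes
    return rate < min_per_minute or rate > max_per_minute


# One minute of video at 30 frames per second.
# "natural": the eye closes for 3 frames every 4 seconds.
natural = [0.05 if i % 120 < 3 else 0.3 for i in range(1800)]
# "frozen": the eye never closes, as in some early deep fakes.
frozen = [0.3] * 1800

if __name__ == "__main__":
    print(count_blinks(natural), blink_rate_is_unusual(natural, fps=30))
    print(count_blinks(frozen), blink_rate_is_unusual(frozen, fps=30))
```

A check like this is only one weak signal. As the next section explains, deep fake makers can adapt once a cue such as blinking becomes known.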

Why are deep fakes becoming harder to detect?

- Newer and more advanced deep fakes use a machine learning technique called a generative adversarial network, or GAN.

- This type of machine learning system consists of two neural networks that are trained against each other. One network generates the fake and the other tries to detect it; the content iterates back and forth between them and improves with each round.

- This dynamic is replicated in the wider research landscape, where each new deep fake detection technique gives deep fake makers a new challenge to overcome, making deep fakes increasingly hard to catch.

- Thus, any deep fake detector will work only for a short while before deep fake makers account for it in their algorithms.
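The generator-versus-detector loop described above can be illustrated with a deliberately tiny toy, sketched below under strong assumptions. Instead of images, the "real media" are single numbers drawn around 4.0; the generator is one shift parameter `theta`, and the discriminator is a one-input logistic classifier. Real deep fake GANs use deep neural networks on images, and every name and constant here is hypothetical. The sketch only shows the alternating update pattern; such a simple setup is not guaranteed to settle and may oscillate.

```python
import math
import random
from typing import Tuple


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(
    steps: int, batch: int = 64, lr: float = 0.05, seed: int = 0
) -> Tuple[float, float, float]:
    """Alternate discriminator and generator updates on 1-D toy data.

    Returns (theta, w, b): the generator shift and the discriminator weights.
    """
    rng = random.Random(seed)
    theta = 0.0      # generator: fake sample = noise + theta
    w, b = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + b), "probability real"

    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]          # assumed real data
        fake = [rng.gauss(0.0, 1.0) + theta for _ in range(batch)]  # generator output

        # Discriminator step: minimize -[log D(real) + log(1 - D(fake))].
        grad_w = grad_b = 0.0
        for x in real:
            d = sigmoid(w * x + b)
            grad_w += -(1.0 - d) * x
            grad_b += -(1.0 - d)
        for x in fake:
            d = sigmoid(w * x + b)
            grad_w += d * x
            grad_b += d
        w -= lr * grad_w / batch
        b -= lr * grad_b / batch

        # Generator step: minimize -log D(fake), i.e. try to fool the discriminator.
        grad_theta = 0.0
        for x in fake:
            d = sigmoid(w * x + b)
            grad_theta += -(1.0 - d) * w
        theta -= lr * grad_theta / batch

    return theta, w, b


if __name__ == "__main__":
    theta, w, b = train_toy_gan(steps=200)
    print(f"generator shift after training: {theta:.3f}")
```

After the first round the discriminator has learned that larger values look "more real", so the generator is pushed to shift its output upward, toward the real data. That push-and-respond cycle is the same dynamic that makes each new detection cue a training signal for the next generation of fakes.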

What are the threats posed by deep fakes?

- Can lead to a new type of warfare: A deep fake can be used as a powerful tool by a nation-state or non-state actors to undermine public safety and create uncertainty and chaos in the target country.

- Can undermine democracy: Deep fakes can be used to spread false information about public policy, institutions, and politicians, which can be exploited to shape narratives and manipulate beliefs.

- A high-quality deepfake can create false information that can cast a shadow on the legitimacy of the voting process and election results.

- Deep fakes can become an effective tool to induce polarization, amplify division in society, and suppress dissent.

- Can be used for targeting women: The malicious use of deep fakes can be seen in pornography, inflicting emotional and reputational harm on the individual and, in some cases, leading to violence against them. Women can be disproportionately affected.

- Can cause damage to personal reputation: A deep fake can depict a person indulging in antisocial behavior and saying vile things. Even when the deep fake is debunked, it may be too late to remedy the initial harm caused to the victim.

- Can be used for financial and other frauds: Deep fakes can be deployed to extract money or confidential information, or to exact favors, from individuals.

How to counter deep fakes?

- Technological Interventions: Technical countermeasures used to mitigate the impact of deep fakes fall into three categories: media authentication, media provenance, and deepfake detection.

- Media authentication includes solutions that help prove integrity across the media lifecycle through watermarking, media verification markers, signatures, and chain-of-custody logging.

- Media provenance includes providing information on media origin, either in the media itself or as metadata of the media.

- Deepfake detection includes solutions that use various detection techniques to determine whether target media has been manipulated or synthetically generated.

- Increased public awareness and behavioral change in society: Media literacy for consumers and journalists is the most effective tool to combat disinformation and deep fakes. Everyone also needs to take responsibility for being a critical consumer of media on the Internet, and to pause and think before sharing on social media.

- Proper Regulation: Effective regulations involving a discussion with the tech industry, civil society, academicians and policymakers can help to disincentivize the creation and distribution of malicious deep fakes.
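Of the technological interventions listed above, media authentication is the easiest to sketch in a few lines. The example below is a minimal illustration, not a production design: it signs a media file with a shared secret key (HMAC-SHA256) and keeps a hash-chained chain-of-custody log, so that any later change to the file or to an earlier log entry becomes detectable. All function names and the log layout are hypothetical, and a shared-key HMAC is an assumption made for brevity; deployed systems would more likely rely on public-key signatures.

```python
import hashlib
import hmac
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first log entry


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Return an HMAC-SHA256 signature over the raw media bytes."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, key: bytes, signature: str) -> bool:
    """Check the signature using a constant-time comparison."""
    return hmac.compare_digest(sign_media(media_bytes, key), signature)


def _hash_entry(body: Dict[str, str]) -> str:
    """Hash a log entry body in a stable (sorted-key) JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_custody_entry(
    log: List[Dict[str, str]], actor: str, action: str, media_bytes: bytes
) -> List[Dict[str, str]]:
    """Return a new log with one more entry linked to the previous entry's hash."""
    entry = {
        "actor": actor,
        "action": action,
        "media_sha256": hashlib.sha256(media_bytes).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry["entry_hash"] = _hash_entry(entry)  # hash is computed before the field is added
    return log + [entry]


def custody_log_is_intact(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and link; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_entry(body):
            return False
        prev_hash = entry["entry_hash"]
    return True


if __name__ == "__main__":
    key = b"demo-secret-key"          # hypothetical key, for illustration only
    video = b"original video bytes"   # stand-in for a real media file
    signature = sign_media(video, key)
    print(verify_media(video, key, signature))
    print(verify_media(b"doctored video bytes", key, signature))
```

The signature answers "has this file changed since it was signed?", while the custody log answers "who handled it, and in what order?". Neither proves that the original recording was truthful; they only make later tampering visible.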

Conclusion

In today’s world of the internet and social media, the infodemic and its associated problems of fake news and disinformation have become a major challenge for the internal as well as external security of nations. Deep fakes only add to these challenges. An important step, therefore, would be to increase public awareness of the possibilities and dangers of deep fakes. Informed citizens will act as a strong defense against misinformation and the national security threat it poses.