25th April 2022 (7 Topics)

Context

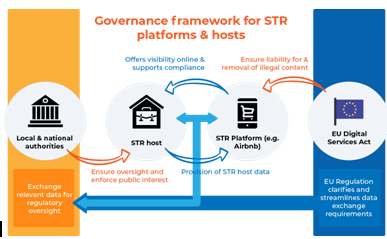

The European Parliament and European Union (EU) Member States recently announced that they had reached a political agreement on the Digital Services Act (DSA).

About

About the legislation:

- The Digital Services Act (DSA) is landmark legislation that will force big Internet companies to act against disinformation and illegal and harmful content, and to “provide better protection for Internet users and their fundamental rights”.

- The Act was proposed by the European Commission in December 2020.

- As defined by the European Commission, the DSA is “a set of common rules on intermediaries’ obligations and accountability across the single market”, and aims to ensure a higher level of protection for all EU users, irrespective of their country.

- The proposed Act will work in conjunction with the EU’s Digital Markets Act (DMA).

- The DSA will tightly regulate how intermediaries, especially large platforms such as Google, Facebook and YouTube, moderate user content.

- Instead of letting platforms decide how to deal with abusive or illegal content, the DSA will lay down specific rules and obligations for these companies to follow.

- According to the EU, the DSA will apply to a “large category of online services, from simple websites to Internet infrastructure services and online platforms.”

- The obligations for each of these will differ according to their size and role.

- The legislation brings within its ambit providers of Internet access, domain name registrars, and hosting services such as cloud computing and web-hosting services.

- More importantly, very large online platforms (VLOPs) and very large online search engines (VLOSEs) will face “more stringent requirements.”

What do the new rules state?

- A wide range of proposals seeks to minimise or remove the negative social impact of many practices followed by the Internet giants.

- Online platforms and intermediaries such as Facebook, Google and YouTube will have to add “new procedures for faster removal” of content deemed illegal or harmful.

- Marketplaces such as Amazon will have to “impose a duty of care” on sellers who are using their platform to sell products online.

- They will have to “collect and display information on the products and services sold in order to ensure that consumers are properly informed.”

- The DSA adds “an obligation for very large digital platforms and services to analyse systemic risks they create and to carry out risk reduction analysis”.

- The Act proposes to give vetted independent researchers access to public data from these platforms, so that they can carry out studies to better understand these risks.

- The DSA proposes to ban ‘Dark Patterns’ or “misleading interfaces” that are designed to trick users into doing something that they would not agree to otherwise.

- The DSA incorporates a new crisis mechanism clause, introduced in the context of the Russia-Ukraine conflict, which will be “activated by the Commission on the recommendation of the board of national Digital Services Coordinators”.

- However, these special measures will remain in place for only three months.

- The law proposes stronger protection for minors, and aims to ban advertising targeted at them on the basis of their personal data.

- It also proposes “transparency measures for online platforms on a variety of issues, including on the algorithms used for recommending content or products to users”.

Does this mean that social media platforms will now be liable for any unlawful content?

- It has been clarified that platforms and other intermediaries will not be liable for the unlawful behaviour of users. They therefore retain a form of ‘safe harbour’ protection.

- However, if platforms are “aware of illegal acts and fail to remove them,” they will be liable for that user behaviour. Small platforms that remove any illegal content they detect will not be liable.

India’s IT Rules:

- India’s IT Rules, announced last year (2021), make a social media intermediary and its executives liable if the company fails to carry out due diligence.

- Rule 4(1)(a) states that significant social media intermediaries, such as Facebook or Google, must appoint a chief compliance officer (CCO), who can be booked (held personally liable) if a tweet or post that violates local laws is not removed within the stipulated period.

- India’s Rules also require significant social media intermediaries to publish a monthly compliance report.

- They include a clause requiring platforms to trace the originator of a message; this provision has been challenged by WhatsApp in the Delhi High Court.